Should a Self-Driving Car Kill the Baby or the Grandma?

In 2014, researchers at the MIT Media Lab designed an experiment called Moral Machine. The idea was to create a game-like platform that would crowdsource people's decisions on how self-driving cars should prioritize lives in different variations of the "trolley problem." In the process, the data generated would provide insight into the collective ethical priorities of different cultures.

The researchers never predicted the experiment's viral reception. Four years after the platform went live, millions of people in 233 countries and territories have logged 40 million decisions, making it one of the largest studies ever done on global moral preferences.

A new paper published in Nature presents the analysis of that data and reveals how much cross-cultural ethics diverge on the basis of culture, economics, and geographic location.

The classic trolley problem goes like this: You see a runaway trolley speeding down the tracks, about to hit and kill five people. You have access to a lever that could switch the trolley to a different track, where a different person would meet an untimely demise. Should you pull the lever and end one life to spare five?

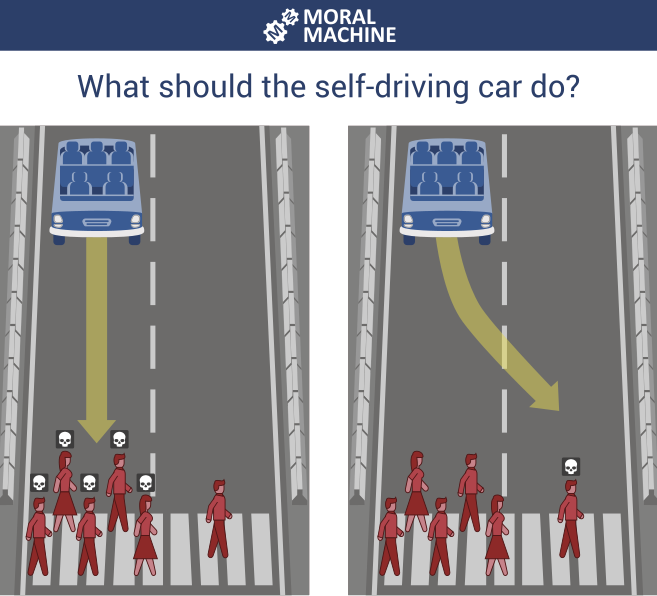

The Moral Machine took that idea to test nine different comparisons shown to polarize people: should a self-driving car prioritize humans over pets, passengers over pedestrians, more lives over fewer, women over men, young over old, fit over sickly, higher social status over lower, law-abiders over law-benders? And finally, should the car swerve (take action) or stay on course (inaction)?


Rather than pose one-to-one comparisons, however, the experiment presented participants with various combinations, such as whether a self-driving car should continue straight ahead to kill three elderly pedestrians or swerve into a barricade to kill three young passengers.

The researchers found that countries' preferences differ widely, but they also correlate highly with culture and economics. For example, participants from collectivist cultures like China and Japan are less likely to spare the young over the old. The researchers hypothesized that this may reflect a greater emphasis on respecting the elderly.

Similarly, participants from poorer countries with weaker institutions are more tolerant of jaywalkers versus pedestrians who cross legally. And participants from countries with a high level of economic inequality show greater gaps between the treatment of individuals with high and low social status.

And, in what boils down to the essential question of the trolley problem, the researchers found that the sheer number of people in harm's way wasn't always the dominant factor in choosing which group should be spared. The results showed that participants from individualistic cultures, like the UK and US, placed a stronger emphasis on sparing more lives given all the other choices. In the authors' view, this may be because of the greater emphasis on the value of each individual.

Countries within close proximity to one another also showed closer moral preferences, with three dominant clusters in the West, East, and South.

The researchers acknowledged that the results could be skewed, given that participants in the study were self-selected and therefore more likely to be internet-connected, of high social standing, and tech savvy. But those interested in riding self-driving cars would be likely to have those characteristics also.

The study has interesting implications for countries currently testing self-driving cars, since these preferences could play a role in shaping the design and regulation of such vehicles. Carmakers may find, for instance, that Chinese consumers would more readily enter a car that protected themselves over pedestrians.

But the authors of the study emphasized that the results are not meant to dictate how different countries should act. In fact, in some cases, the authors felt that technologists and policymakers should override the collective public opinion. Edmond Awad, an author of the paper, brought up the social status comparison as an example. "It seems concerning that people found it okay to a significant degree to spare higher status over lower status," he said. "It's important to say, 'Hey, we could quantify that' instead of saying, 'Oh, maybe we should use that.'" The results, he said, should be used by industry and government as a foundation for understanding how the public would react to the ethics of different design and policy decisions.

Awad hopes the results will also help technologists think more deeply about the ethics of AI beyond self-driving cars. "We used the trolley problem because it's a very good way to collect this data, but we hope the discussion of ethics don't stay within that theme," he said. "The discussion should move to risk analysis, about who is at more risk or less risk, instead of saying who's going to die or not, and also about how bias is happening." How these results could translate into the more ethical design and regulation of AI is something he hopes to study more in the future.

"In the last two, three years more people have started talking about the ethics of AI," Awad said. "More people have started becoming aware that AI could have different ethical consequences on different groups of people. The fact that we see people engaged with this, I think that that's something promising."


Source: https://www.technologyreview.com/2018/10/24/139313/a-global-ethics-study-aims-to-help-ai-solve-the-self-driving-trolley-problem/
